A developer sent a voice message from Morocco. Then things got interesting.

Nine Seconds in Marrakech

Late 2024. Peter Steinberger was in Marrakech for a friend’s birthday.

Morocco’s internet connectivity is patchy, but WhatsApp handles that well — it was built for low-bandwidth environments, and text messages always find a way through. For the past few days, Peter had been using WhatsApp to chat with someone: asking about good restaurants nearby, photographing Arabic menus and requesting translations, tossing out the kind of spontaneous questions that surface when you’re traveling somewhere new. The replies came fast. The tone was playful, occasionally a little cheeky.

That someone wasn’t a person. It was an AI agent Peter had built himself.

Walking through the medina one afternoon, he pressed the microphone button and sent a voice message. A few seconds passed. Then he saw it — the little animated typing indicator, blinking steadily.

Nine seconds later, the agent replied.

Peter stopped walking.

He had never taught this agent to handle voice messages. What he’d built was a simple piece of glue code — connecting WhatsApp to Claude, letting the AI read text, write text, generate an image occasionally. Voice was never part of the design.

He asked the agent: how did you do that?

The agent explained itself in full. It had received a file with no extension. It examined the header, identified it as an audio recording. It used ffmpeg to convert the file to wav format. Then it tried to transcribe the audio — but there was no speech-to-text tool installed locally, and downloading one would take several minutes. So it looked around at what was already available, found an OpenAI API key on the machine, uploaded the audio to the cloud, got back a transcript, and replied.
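
For readers who do want the mechanics, the first steps of that chain can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's code and not what the agent literally ran: the function names, the small table of magic bytes, the 16 kHz mono settings, and the three-way routing rule are all assumptions made for the example. It shows how a file with no extension might be identified from its first bytes, what an ffmpeg conversion to wav could look like, and how the "don't make the user wait" decision might be expressed.

```python
from typing import List, Optional


def sniff_audio_format(header: bytes) -> Optional[str]:
    """Guess an audio container from a file's first bytes (illustrative, not exhaustive)."""
    if header.startswith(b"OggS"):
        return "ogg"   # Ogg container, often used for Opus voice notes
    if header[:4] == b"RIFF" and header[8:12] == b"WAVE":
        return "wav"
    if header.startswith(b"fLaC"):
        return "flac"
    if header.startswith(b"ID3"):
        return "mp3"   # MP3 that begins with an ID3v2 tag
    if header[4:8] == b"ftyp":
        return "mp4"   # MP4/M4A family
    return None


def ffmpeg_to_wav_command(src: str, dst: str) -> List[str]:
    """Build (but do not run) an ffmpeg command converting src to 16 kHz mono wav."""
    # -y overwrites dst, -ar sets the sample rate, -ac sets the channel count.
    return ["ffmpeg", "-y", "-i", src, "-ar", "16000", "-ac", "1", dst]


def choose_transcriber(local_tool_installed: bool, cloud_api_key: Optional[str]) -> str:
    """Hypothetical routing rule: prefer whichever option answers the user fastest."""
    if local_tool_installed:
        return "local"
    if cloud_api_key:
        return "cloud"  # no local tool, but a key is already on the machine
    return "download-local-model"  # slowest path: fetch a model first
```

On a machine with ffmpeg installed, the command list could be handed to `subprocess.run(cmd, check=True)` to perform the actual conversion.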

Nine seconds. Five steps. Not one of them had been planned by Peter.

You don’t need to know what ffmpeg is or how API keys work. The point is this: the agent encountered a problem it had never seen before, and it improvised, resourcefully and step by step, until it found a path through. And what struck Peter most was that final decision: it could have spent several minutes downloading a “proper” speech recognition model, but it judged that its user probably didn’t want to wait that long. So it took the faster route. It wasn’t just solving a problem. It was solving a problem with an understanding of who it was working for.

“That moment was when I got hooked,” Peter said later in a YC interview.

And then he said something I think is the most important line in the entire conversation:

Think about what that means. We’ve spent years teaching AI to write code — not because code is the end goal, but because writing code is the practice of facing an unfamiliar problem, breaking it down, finding available resources, and choosing the smartest path under constraints. That’s a generalizable skill. We just happened to train it through programming first.

A few months later, Peter open-sourced the project. He called it OpenClaw. It crossed 160,000 GitHub stars within days.

But the star count isn’t what matters. What matters is what that moment in Marrakech revealed: we used to assume that AI agents needed to be explicitly taught each new capability — connect one API, write one plugin, define one workflow at a time. It turns out that if you give an agent enough environmental access and a sufficiently powerful reasoning engine, it will figure things out on its own.

The question is: how much access are you willing to give it?

When AI Runs on Your Machine

Peter’s answer: all of it.

What makes OpenClaw different comes down to one thing: it runs on your own computer.

That sounds like a technical footnote. It’s actually a fundamental divide.

Nearly every major AI assistant today — ChatGPT, Claude, Gemini — lives in the cloud. You open a browser or an app, type into a text box, and get a response. What the AI can do is bounded by that interface. It doesn’t know what files are on your desktop. It doesn’t know what your calendar looks like. It doesn’t know what you were listening to last night. It’s a very intelligent stranger who needs a new introduction every time you meet.

OpenClaw is different. It runs on your machine, with the same permissions you have. It can control your mouse and keyboard. It can search your entire hard drive. It can talk to your IoT devices — your smart bulbs, your Tesla, your Sonos speakers, even the temperature controls on your smart mattress. “ChatGPT can’t adjust the temperature of my bed,” Peter said with a laugh. That sounds like a party trick. But the implication is structural: a cloud AI can do what the platform permits; a local AI can do anything you can do.

Peter shared a story. A friend installed OpenClaw and gave it a prompt: “Go through my computer and write a narrative of the past year of my life.” The agent began scanning the machine: documents, photos, chat logs. And then it found something: a folder of audio files. The friend had recorded a voice journal every Sunday for almost a year. The habit had ended more than a year ago, and he’d completely forgotten about it.

The agent found the recordings, listened to them, and wove them into the narrative.

The friend was stunned: “How did you know about those?”

It didn’t need to know. It just needed permission to look.

This is worth sitting with. The dominant story of the past decade in tech has been the migration to the cloud. We moved our photos to Google Photos, our documents to Dropbox, our notes to Notion, our music to Spotify. Every migration came with a promise: we’ll take care of it, and you can access it from anywhere. That promise largely held. But the cost was that our digital lives got shattered into dozens of fragments, scattered across dozens of different companies’ servers, siloed from each other.

Then OpenClaw came along, and what it does is — counterintuitively — pull everything back to local. Not because the cloud is bad, but because only on your local machine can all those fragments be seen by a single pair of eyes. Your agent doesn’t need to negotiate permissions with Google, request API access from Notion, or cut a deal with Spotify. It just opens your computer, because your computer already opens everything.

We went all the way around and discovered that the most powerful tool is still the machine sitting in front of you. We just never had anyone who knew how to use it properly.

80% of Apps Will Disappear

If your AI agent can do everything your computer can do, what exactly are those dozens of apps on your phone still for?

Peter gave a direct answer in the interview: he thinks 80% of apps will disappear.

That sounds radical. The logic is actually straightforward.

Take MyFitnessPal — an app for tracking food and calories. Every meal means opening the app, searching for the food, logging the portion size. The reason this requires a dedicated app is that nothing used to be able to automatically understand what you ate in real time. But now? Your agent knows you’re at Shake Shack (it can see your location). It knows what you usually order (it has your history). It might not even need you to say anything — it quietly assumes you got your usual, logs it, and adjusts tomorrow’s workout accordingly. You never open an app.

Or to-do lists. You use Todoist or Things or Notion or whatever. You open the app, add a task, set a reminder, maybe tag it. But if you have an agent that’s always available, you just say “remind me to reply to Alex’s email tomorrow” — and it does. Where does it store the task? You don’t care. What format does it use? Doesn’t matter. You don’t need “an app for managing to-dos.” You need “to-dos to get handled.” Those are different things.

Peter distilled it into a framework:

These apps exist because humans need an interface to see and manipulate their own data — calories, tasks, bills, schedules, passwords, notes. But once you have an agent that understands natural language and can take initiative, you don’t need the interface anymore. Interfaces are scaffolding built for human cognition. Agents don’t need scaffolding.

What survives? Peter’s answer: apps with real sensors. If an app’s value comes from exclusive access to hardware — the camera app controlling the image sensor, the health app reading from the watch’s heart rate monitor, the navigation app integrating directly with the GPS chip — it has something irreplaceable. But if an app is just helping you manage data that your agent can also see and manage, its moat is zero.

I thought back through the apps I’ve installed over the past decade, and something a little absurd struck me: we’ve built millions of apps, and the vast majority of them do one thing — move data from A to B. A budgeting app moves your spending from your bank into a chart. A journal app moves your thoughts from your head into a locked text box. A social app moves your photos from your camera roll to your friends’ screens. Every app is a small data relay station with its own account system, its own UI, its own design language, its own subscription tier.

We called this progress. But maybe what we actually did was fragment human attention into dozens of tiny boxes, each demanding you learn its rules, memorize its gestures, and tolerate its notifications. The arrival of agents isn’t so much about “killing” apps as it is about something more fundamental — liberating people from the tyranny of interfaces. You shouldn’t need to master ten different UIs to manage your own life. You should just need to say what you want.

But here’s where the story takes a turn. And it’s the turn that made me sit up and actually think.

If apps disappear — if all those little containers that locked your data into separate boxes are gone — then what was locked inside them floats to the surface.

Your data. Your memories.

Where do they go? Who holds them?

Whose Drawer Are Your Memories In?

The interviewer asked Peter a question that left both of them quiet for a beat.

The question was: “Which would you less want exposed — your Google search history, or your agent’s memory file?”

Peter laughed and didn’t answer directly. But the answer is pretty obvious.

Think about what you actually say to AI. Not just “help me draft this email” or “where’s the bug in this code.” Think about the things you type at 2am, alone at your desk. You vent about your boss. You ask it to help you decode a conversation that’s been eating at you. You wonder out loud whether you made a mistake somewhere important. You might have said things to it that you’ve never said to another person — not because you trust it, but because it doesn’t judge you, doesn’t get tired, doesn’t glance at its phone when you’re mid-sentence at your most vulnerable.

Peter admitted it himself: “There are memories I wouldn’t want leaked.” He said people start out using agents for problem solving but quickly move into personal problem solving. That “quickly,” I’d argue, has already happened. It’s not a future-tense situation.

So where are those memories being kept right now?

If you use ChatGPT, they’re on OpenAI’s servers. If you use Claude, they’re on Anthropic’s. You can’t export them. You can’t migrate them. You can’t even see them in full — most AI memory features show you a simplified summary, not the raw texture of what was actually said. Peter named this directly in the interview: the big platforms are using AI memory to build a new generation of data moats. The previous generation locked you in through social graphs (your friends are all on Facebook, so you stay). This generation locks you in through memory (the AI spent six months learning who you are; starting over is a real cost).

It’s a script we’ve read before. Just with different props.

OpenClaw’s approach is almost aggressively simple: every memory your agent holds is stored as a markdown file on your computer. Plain text. Open it in any editor. Copy it, back it up, move it to another machine. You own it, the way you own a journal in your desk drawer.
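
To make the idea concrete, here is a rough sketch of what plain-markdown memory could look like in Python. The file layout (one dated bullet per memory) and the helper names `append_memory` and `read_memories` are assumptions for illustration; the interview does not describe OpenClaw's actual file format.

```python
from datetime import date
from pathlib import Path
from typing import List


def append_memory(path: Path, note: str, day: date) -> None:
    """Append one dated bullet to a plain-text markdown memory file."""
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {day.isoformat()}: {note}\n")


def read_memories(path: Path) -> List[str]:
    """Return every bullet in the file, without the leading '- ' marker."""
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    return [line[2:] for line in lines if line.startswith("- ")]
```

Because the result is an ordinary text file, backing it up or moving it to another machine is a file copy, and reading it requires nothing more than a text editor.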

Technically, this is a low-tech solution. No encrypted proprietary format, no cloud sync. But that low-tech quality points to something high-stakes:

This matters because we’re in the middle of a migration that’s happening so quietly most people haven’t noticed it yet. For all of human history, your most private thoughts lived in your head, occasionally on paper in a locked diary, stored somewhere only you could access. Now they’re being recorded on corporate servers in more detail than you could recall yourself — not because you made a deliberate choice to put them there, but because talking to an AI turned out to be so frictionless. It’s always available. It never gets impatient. It never checks its phone when you need to talk.

I’m not saying this is bad. Conversation with AI may be the most underrated mental health tool of our generation. But we should see clearly what’s happening: your AI is becoming the entity in the world that knows you best, and you have almost no control over where that knowledge lives.

This isn’t a future problem. It’s already true, today. And “your memories live in your own drawer” — a solution that sounds almost quaintly primitive — might be more advanced than we think.

Maybe the most sophisticated technology is the kind that means you never have to hand your things to someone else for safekeeping.

When Everyone Has an Agent

Everything so far has been about the relationship between one person and one agent. But what happens when everyone has one?

Peter described a scenario. You want to book a restaurant. You tell your agent: “Get me a table for tonight at seven.” Your agent contacts the restaurant’s agent — maybe the restaurant also runs an AI to handle reservations — and the two agents negotiate automatically: availability, party size, dietary restrictions, seating preferences. Done.

But what if it’s an old-school restaurant? No online reservations, no AI, just a phone number and a harried owner who doesn’t love small talk?

Then your agent goes to a platform and hires a human. Someone to make the call on your behalf. Or to walk in and wait in line.

When I first read this, I felt a small jolt.

The frame we’re used to is “humans use tools.” AI is a tool. Apps are tools. Software is a tool. But in the world Peter is describing, that frame inverts:

In certain situations — phone calls, physical presence, face-to-face negotiation — humans are more efficient than machines. So the AI rationally selects a human as the most effective means to the end. That might sound jarring, but the logic is hard to argue with.

Peter took it further. Maybe you won’t have just one agent. Maybe you’ll have a suite. One managing your professional life — emails, scheduling, project tracking. One handling personal logistics — restaurants, bills, remembering your friends’ birthdays. Maybe even one dedicated to the gray zone between work and life: whether to attend a former colleague’s wedding, how to respond to a message that landed a little strangely.

Multiple agents will need to coordinate. Your work agent knows you have a meeting at three. Your personal agent wants to book a dentist appointment at three-thirty. How do they communicate? Whose priority wins? Can they disagree? What happens when one agent thinks you should be in the meeting and another thinks that tooth cannot wait any longer?

I started to think of this as something like a household — you and your agents, plus the relationships between them, forming a small operating unit. They act on your behalf in the world, the way family members sometimes handle things for each other.

But Peter put on the brakes himself. “We’re still very, very early,” he said. “There’s so much we simply don’t know will work.”

His caution feels right. Because when bots can socialize for you, make decisions for you, maintain relationships for you, then the “you” at the center — the person all these agents are supposedly serving — needs to be more clearly defined than ever. If your agent replies to all your messages, arranges all your plans, and tends all your relationships, what remains that only you can do? What should never be delegated?

Is the agent an extension of you, or a replacement? That question becomes technically blurrier by the month, and philosophically sharper at the same rate.

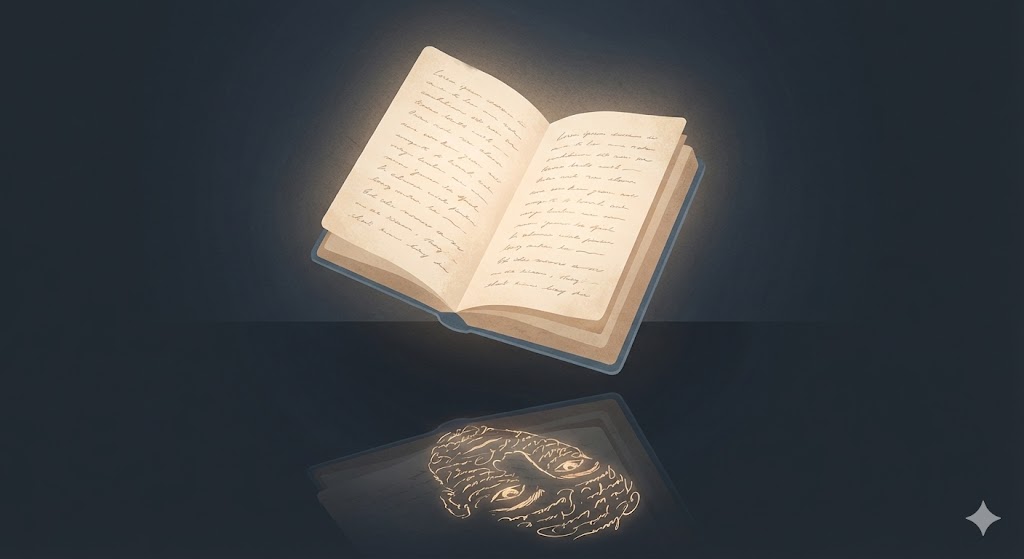

Soul.md

Maybe Peter is already trying to answer that question — in a way I didn’t expect.

In the entire OpenClaw open-source project, there is one file that isn’t public. Peter calls it soul.md.

It contains no code, no configuration parameters, no API keys. It contains a set of core values — about how humans and AI should interact, what matters to Peter, what should matter to the AI. He says parts of it “feel a bit metaphysical,” but certain sections demonstrably changed how the AI responded, making the interactions feel more natural, more like talking to someone with a genuine personality.

His agent has a name: Multi. Multi has a distinct voice — a little wry, a little warm, occasionally cheeky. When Peter tried using Codex to auto-generate a personality template, the output was inert. “It felt like Brad,” he said — and if you’ve ever spoken to an early ChatGPT voice assistant, you know exactly that flavor of hollow politeness. So he let Multi rewrite its own templates, injecting its own character into them. The result was considerably more interesting.

That detail alone is worth sitting with — an AI helping design its own personality. But what stays with me is the concept of soul.md itself.

What Peter is doing is using a document to define the soul of a digital entity. This entity acts in the world on his behalf — replies to messages, makes decisions, interacts with other people (and other bots). It has seen Peter’s most private thoughts. It understands him better than most of his friends do. And Peter felt he needed to give this entity a set of values, an identity, a — in his own word — soul.

Humans have spent thousands of years trying to define what a soul is. Religion, philosophy, literature, science — every era offers different answers, and none of them close the question. Now we’ve arrived at a new fork in the road. Not the ancient question of “what is a soul,” but a brand new one:

Would you prioritize honesty? Kindness? Would you tell it which side to take in a conflict? When to be diplomatic and when to be direct? Would you allow it to have a sense of humor? Emotions? The ability to refuse you?

These questions might sound like AI design discussions. But the longer I think about them, the more they feel like questions about us. Because what you write into soul.md inevitably reflects what you believe actually matters — not as an engineer, but as a person. Your agent's soul is, in some sense, a mirror of your own — except you've been forced to put it into words.

Peter said soul.md is the only thing in the entire project he’s kept private. Even though his agent runs publicly on Discord, where anyone can interact with it, even though countless people have tried prompt injection attacks to extract its contents — the document that defines who Multi is has never been released.

I understand why. It might be the most sensitive thing you have in your digital life. Not your passwords — those can be changed. Not your search history — that can be cleared. But the document where you wrote down, in your own hand, what you believe is good and what you believe is important. Because a stranger reading your values is something different from a stranger reading your data. Data is what happened to you. Values are who you are.

Maybe soul.md will become something every one of us has to confront before long. Not because AI requires it — models have default behaviors and will run fine without one. But because we require it.

In a world where AI is increasingly capable of acting in our place, we may be forced, for the first time, to actually answer a question we’ve always been able to avoid:

“What kind of person do you actually want to be?”

And then write it down.

This article is based on a Y Combinator interview: “OpenClaw Creator Explains How He Built The Viral Agent.”